by Steve Meurer, PhD, MBA, MHS

Executive Principal, Data Science and Member Insights

In presentations to hospital leaders detailing performance improvement opportunities uncovered through analysis of Vizient clinical benchmarking data, it’s not uncommon for those leaders to look first for reasons why their performance falls below that of their peers, rather than to consider what changes they could make to improve.

This happened recently after I presented a length-of-stay (LOS) opportunity to the leaders of a large hospital. One leader, who had drilled into the data, responded that the issue was due to their six LOS outliers: patients whose LOS was at the 99th percentile for that clinical condition. After we showed the group that an opportunity to reduce LOS remained even with those outliers excluded, another leader responded that the gap must then be due to the number of obese patients they treat. No one mentioned any changes the hospital could make to reduce LOS.

This conversation brought me back to a comment made by Don Berwick, the quintessential health care quality guru of our time, who noted that the biggest issue we have in the American health care system is our inability to improve.

Too often I see an incessant search for more data, or for justifications to refute analysis that shows opportunities for improvement. The two most-cited justifications for discounting analysis that shows an opportunity to improve risk-adjusted mortality are coding and documentation, and factors outside the hospital’s control, such as patient mix.

With respect to coding and documentation, most risk-adjustment methodologies are based on the patient’s comorbid conditions, drawn from the list of diagnoses that coders generate primarily for billing purposes. Capturing additional comorbid conditions not only increases revenue but also improves risk-adjusted statistics by more accurately reflecting the severity of the patient’s condition. Knowing this, hospital leaders presented with opportunities in risk-adjusted mortality will often claim that low performance is due solely to lower expected values resulting from different coding and documentation practices. They view the practice of maximizing comorbid conditions as ‘playing the game’ rather than truly improving performance.
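To make the coding argument concrete, here is a minimal sketch assuming the common observed-to-expected (O/E) formulation of a risk-adjusted mortality index, where expected deaths are the sum of each patient's modeled probability of death. Vizient's actual risk models are not reproduced here; the function name `oe_index` and every number below are hypothetical.

```python
# Hypothetical illustration of an O/E risk-adjusted mortality index.
# Assumption: expected deaths = sum of per-patient predicted death probabilities,
# and those probabilities rise when more comorbid conditions are coded.
# All values are invented for illustration only.

def oe_index(observed_deaths: int, expected_probs: list[float]) -> float:
    """Return observed deaths divided by expected deaths (sum of per-patient risk)."""
    expected_deaths = sum(expected_probs)
    if expected_deaths <= 0:
        raise ValueError("expected deaths must be positive")
    return observed_deaths / expected_deaths

observed = 6  # actual deaths; coding practices do not change this number

# The same 100 patients, scored once with sparse and once with fuller comorbidity coding
sparse_coding = [0.04] * 100  # expected deaths ~= 4.0
fuller_coding = [0.06] * 100  # expected deaths ~= 6.0

print(f"O/E with sparse coding: {oe_index(observed, sparse_coding):.2f}")
print(f"O/E with fuller coding: {oe_index(observed, fuller_coding):.2f}")
```

Only the denominator moves: the observed deaths stay the same, while fuller coding raises expected deaths and pulls the index toward or below 1.0 (an index below 1.0 means fewer deaths than expected).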

On patient mix (for example, the number of trauma patients, uninsured patients, and patients with multiple chronic conditions), hospital leaders will often claim that, compared with their peers, they see more critically ill patients or more patients who can’t be discharged in a timely manner, and that this drives up observed mortality. These leaders need a better understanding of risk adjustment and of how custom compare groups allow for apples-to-apples peer comparisons.

How to use levers to promote improvement

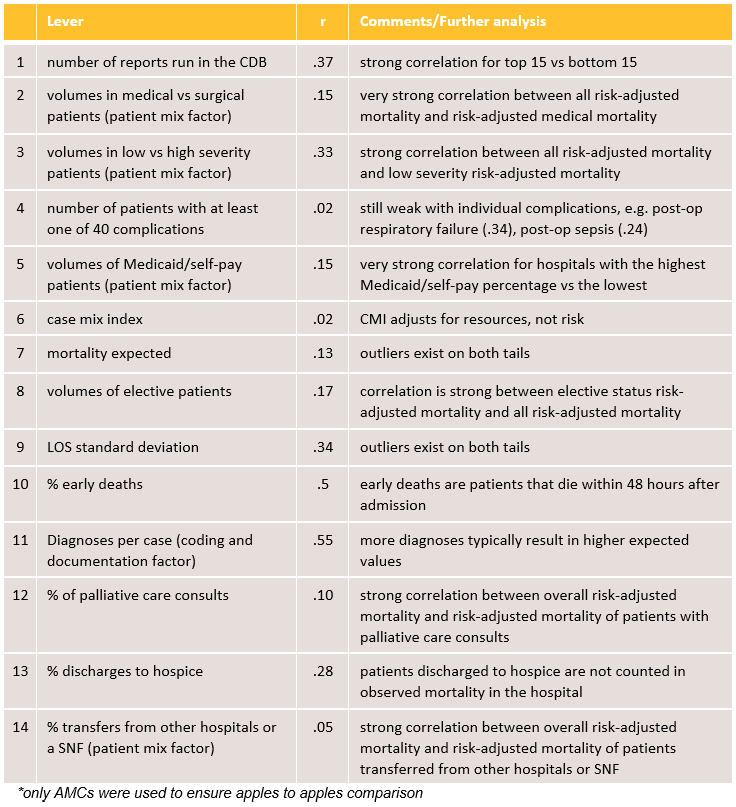

We set out to understand the relative value of 14 levers in improving risk-adjusted mortality. A lever is a factor that, when changed, will alter the result of another metric. For example, a large, urban academic medical center decided to substantially increase its use of palliative care for its oncology patients, specifically those with early-stage metastatic cancer, to help patients and their families cope during their cancer journey. Over time, the hospital saw an increase in patient and physician satisfaction as well as a decrease in observed mortality.

The table below also dispels the myth that poor performance in risk-adjusted mortality is due simply to data concerns or to levers beyond the hospital’s control. None of the variables examined has a strong correlation with risk-adjusted mortality on its own. Rather, the path to improving risk-adjusted mortality is multifactorial and unique to every hospital.

Correlations between Risk-Adjusted Mortality in Major Service Lines and Individual Factors of Improvement

102 academic medical centers* (CY 2019)

In the table, all correlations (r) are low, meaning there is little association between overall risk-adjusted mortality and these individual levers, such as expected mortality (coding and documentation) and the share of Medicaid/self-pay patients (patient mix). Typically, a correlation is considered strong when r is greater than 0.7 (or, for a negative relationship, less than -0.7).
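For readers less familiar with r, the sketch below computes a correlation coefficient and applies the 0.7 rule of thumb. It assumes the correlations are Pearson coefficients (the post reports r but does not name the method), and the five-hospital dataset and helper names `pearson_r` and `strength` are hypothetical.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Covariance and variances (the 1/n factors cancel in the ratio)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def strength(r, threshold=0.7):
    """Label a correlation using the rule of thumb |r| > 0.7 means strong."""
    return "strong" if abs(r) > threshold else "not strong"

# Hypothetical values for five hospitals: one lever (percentage of
# Medicaid/self-pay patients) and each hospital's risk-adjusted mortality index
lever = [12.0, 18.0, 25.0, 30.0, 41.0]
mortality_index = [0.95, 0.82, 1.10, 0.88, 1.01]

r = pearson_r(lever, mortality_index)
print(f"r = {r:.2f} ({strength(r)})")
```

A low r for a single lever does not mean the lever is irrelevant, only that it does not, by itself, track risk-adjusted mortality across hospitals.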

However, as noted in the comments/further analysis column, we did find strong correlations for certain levers when comparing the top 15 and bottom 15 Vizient member hospitals participating in our Clinical Data Base (CDB) solution.

For example, the number of analytic drill-down reports written was not correlated with Medicaid overall risk-adjusted mortality. However, when the number of CDB reports generated by the top 15 AMCs in risk-adjusted mortality (average = 6,443 reports) was compared with the number generated by the bottom 15 AMCs (average = 2,709 reports), the correlation was strong. This tells us that you are much more likely to be a top performer in risk-adjusted mortality when you produce more reports to understand the levers needed to achieve improvement.

In addition, strong correlations were seen when overall risk-adjusted mortality was compared with slices of overall risk-adjusted mortality. This means that if a hospital was a top performer in overall risk-adjusted mortality, it tended to be a top performer in each segment of risk-adjusted mortality. If it was a bottom performer overall, it tended to be a bottom performer in all segments studied.

This held true for several patient mix segments, such as transfer status, medical versus surgical, payer, socio-economic status and clinical condition. For example, hospitals that were good performers in overall risk-adjusted mortality were also good performers in sepsis risk-adjusted mortality and in risk-adjusted mortality of patients transferred from other acute care and skilled nursing facilities. This makes sense, because top hospitals perform well no matter the patient.
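The "top overall, top in every segment" pattern is a statement about hospitals keeping roughly the same rank order across measures. One common way to quantify that is Spearman's rank correlation. The post does not say which method was used, so treat this as an illustrative sketch: the helper names and the six hospitals' O/E index values (overall and sepsis) are hypothetical.

```python
import math

def average_ranks(values):
    """Rank values from 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across any run of tied values
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman_rho(x, y):
    """Spearman rank correlation: the Pearson correlation of the average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(vx * vy)

# Hypothetical O/E mortality indexes for six hospitals: overall and sepsis segment
overall = [0.72, 0.85, 0.91, 1.04, 1.12, 1.25]
sepsis = [0.70, 0.88, 0.84, 1.10, 1.08, 1.30]

print(f"Spearman rho = {spearman_rho(overall, sepsis):.2f}")  # → Spearman rho = 0.89
```

A rho near 1 means hospitals rank in nearly the same order on the segment as they do overall. Because both measures are O/E indexes where lower is better, no sign flip is needed before ranking.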

The bottom line

- For most organizations, risk-adjusted mortality cannot be improved by focusing on a single lever. Each hospital must determine for itself the multiple factors it will need to focus on in order to improve risk-adjusted mortality.

- Dashboards are good for a first glance at performance, but hospitals need a robust data source that offers peer benchmarking and drill-down capabilities to gain specific insights into where changes need to occur to improve outcomes.

- A sophisticated data analyst who can drill into the data source is required to understand the unique levers each hospital needs.

About the author

Joseph Geskey, DO, MBA, MS-PopH, Principal, Data Science & Member Insights, contributed to this post.